How to Link Multimedia Files in INTERACT Software

Learn how to effectively manage and link multimedia files to observational data in INTERACT, ensuring seamless access across different platforms and team environments.

Learn how Mangold creates professional usability lab solutions for conducting user experience research and usability studies, featuring synchronized video recording and eye tracking capabilities.

Comprehensive overview of Mangold's training programs covering data collection, analysis, and visualization for observational research and multimodal studies.

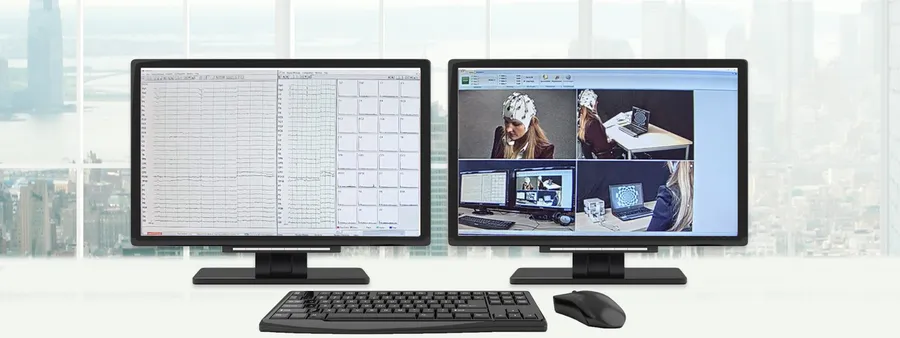

Learn how to integrate high-end EEG recordings with video-based observations in a single study, combining behavioral observation and EEG measurement for richer research insights.

Discover how professional video analysis provides reproducible research results with precision and efficiency. Learn about effective approaches used at TU Braunschweig with Mangold INTERACT.